Why is Behavior Prediction Important for Autonomous Driving?

Prediction is the ability to accurately reason about a driving environment and anticipate the behavior of other road users. This technical blog covers Motional's approach to prediction, and how scaling up our behavior prediction network has been a major part of our vision to develop Large Driving Models (LDMs) that support globally scalable autonomous driving.

Technically Speaking: Omnitag, ML-Powered Multimodal Data Mining Framework

In this blog post, we introduce Omnitag, an ML-Powered Multimodal Data Mining Framework that transforms the "dark matter" of autonomy into refined, ready-to-use fuel for next-generation AVs.

Technically Speaking: Transitioning from Rule-Based to ML-Powered Motion Planning

At Motional, we are pioneering the next evolution of AV technology by transitioning from traditional rule-based planning systems to an end-to-end machine learning (ML) powered motion planning system. This shift allows us to address some of the key limitations of legacy AV architectures and embrace a future where AVs rapidly learn, adapt, and improve with every mile driven.

Technically Speaking: Motional’s Imaging Radar Architecture Paves the Road for Major Improvements

By rethinking system architecture and using machine learning to analyze and process previously discarded low-level radar data, Motional is working on dramatically enhancing radar performance to the point that it rivals the point clouds produced by lidar.

Minds of Motional: Arthur Safira

Arthur Safira, a Senior Engineering Director at Motional, talks about his background, his growth at Motional, and his mission to make a positive, large-scale societal impact with technology.

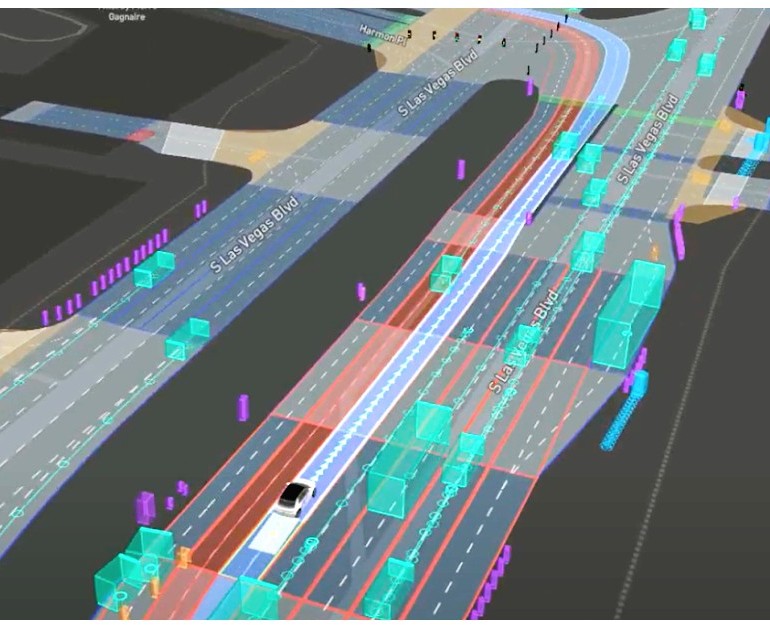

Keeping Focus: Motional’s Robotaxis Block Out Las Vegas Distractions

Motional’s all-electric IONIQ 5 robotaxis are trained to ignore all the lights and sights that make Las Vegas a memorable experience, and instead focus solely on safely navigating through the complex driving environment.

Technically Speaking: Second-Stage Vision Adds Needed Context to Unique Scenarios

Motional has developed a Second-Stage Vision Network that uses machine learning principles to add important context to our object classifications. The additional fine-grained classification then flows downstream, improving our perception, prediction, planning, and control substacks.

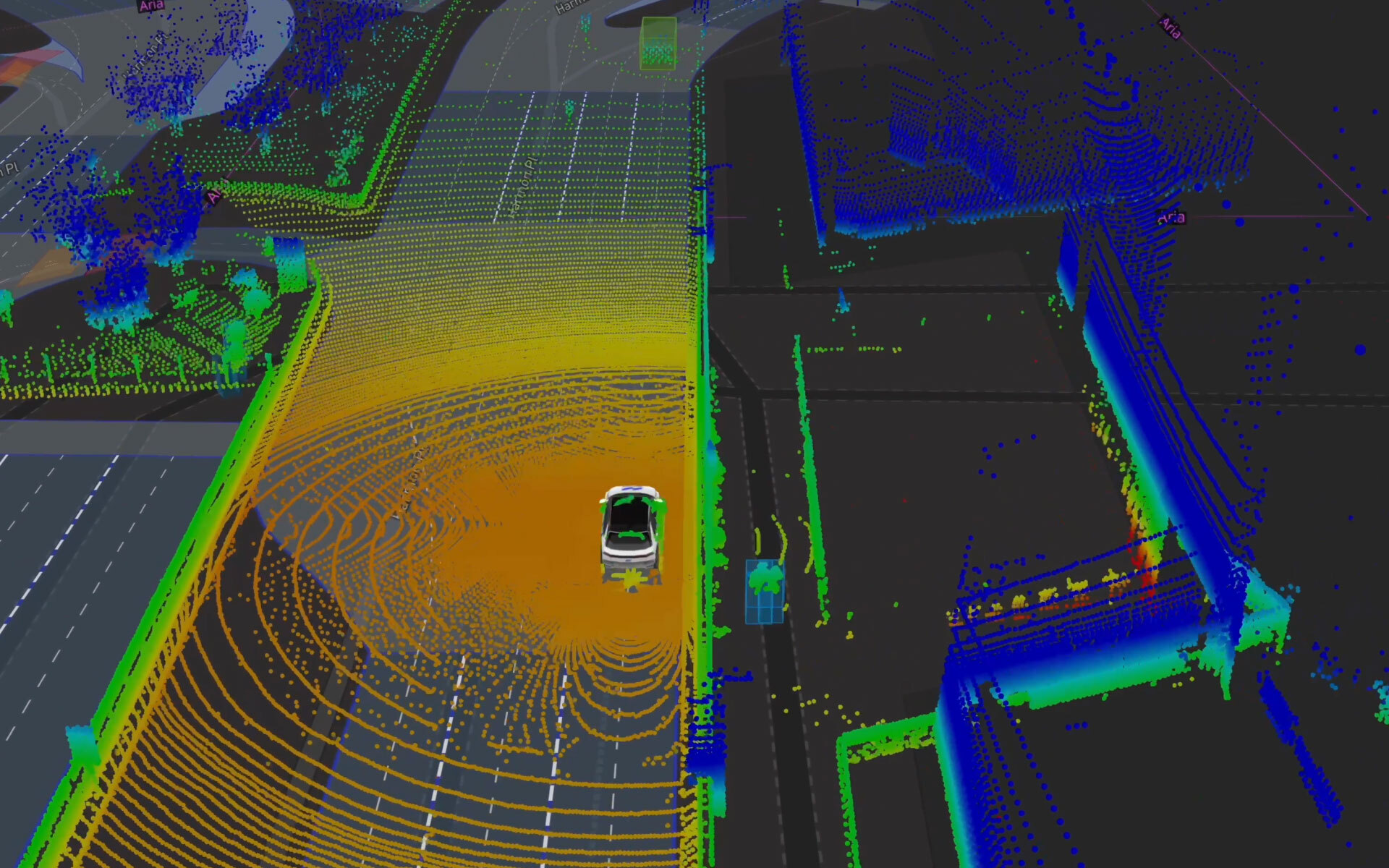

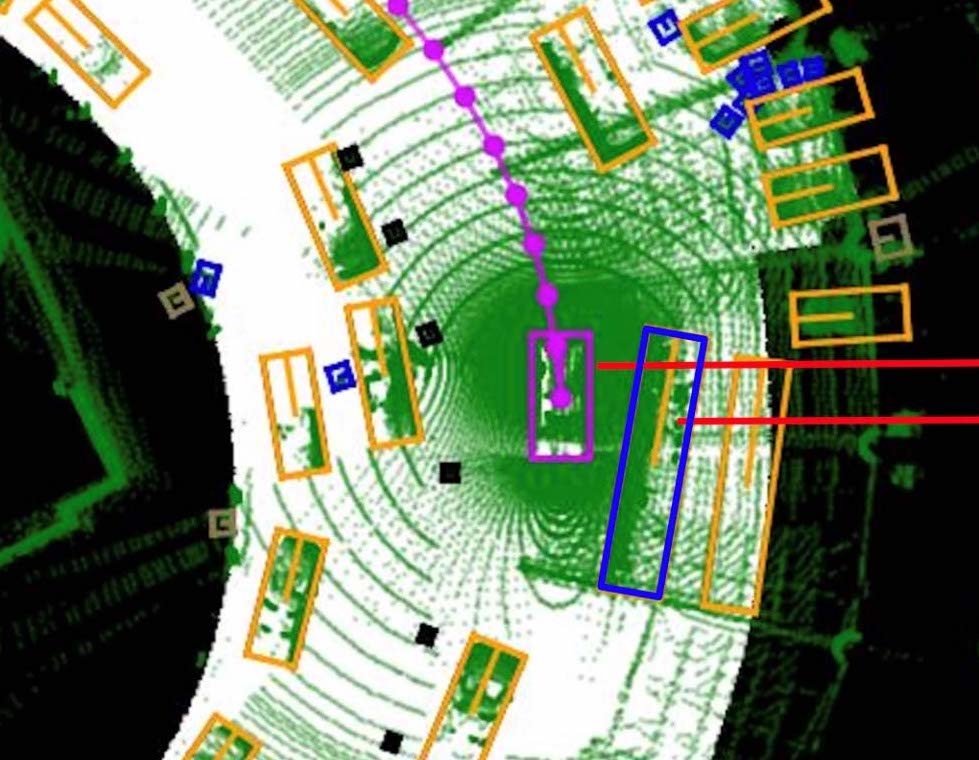

Technically Speaking: Improving Multi-task Agent Behavior Prediction

Motional's PredictNet approach to prediction uses machine learning principles and a multi-task learning architecture to more accurately predict the future behaviors of surrounding agents.

Motional Releases Fourth Annual Consumer Mobility Report, Looking at the Road to Autonomous Vehicle Adoption, Headwinds, and More

The report takes a deep dive into the public perception and understanding of autonomous vehicle (AV) technology, including the headwinds AV companies face from the public, generational perceptions, and factors driving adoption.

Rethinking the Role of Radars as Robotaxis Mature

As AV technology advances, and the global supply chain responds to industry demand, radars could emerge as the central sensors for robotaxis, says Motional's Chief Technology Officer.

DriverlessEd Chapter 7: Outside Your Ride

When developing autonomous vehicles, nothing is more important than safety. The safety of those driving, walking, or biking near our robotaxis is just as important as the safety of the person inside the vehicle. Learn how our vehicles respond to their environment in Chapter 7 of #DriverlessEd.

Technically Speaking: How Continuous Fuzzing Secures Software While Increasing Developer Productivity

Motional uses continuous fuzzing to make sure that our software is as safe and secure as possible before we deploy it and, if there is a glitch, that the system can handle it gracefully.

Technically Speaking: Improving AV Perception Through Transformative Machine Learning

Transformer Neural Networks are receiving increased attention for their potential to improve AI-driven technology. Our latest Technically Speaking blog explores how Motional has been using Transformers to improve our perception function.

Technically Speaking: Using Machine Learning to Map Roadways Faster

Motional's latest Technically Speaking blog explains how we're using machine learning to speed up the process of mapping public roadways prior to launching commercial passenger service.